At GTC 2026, Nvidia laid out how it wants to stay ahead in AI beyond the first wave of generative models. Jensen Huang introduced the Groq 3 inference processor, part of the Rubin platform and designed to push more tokens per watt for large language models, alongside the new Vera CPU systems that target AI agent workloads and expand Nvidia’s role beyond GPUs. He also highlighted the Vera Rubin Space Module concept for orbital data centers and new software like NemoClaw for AI agents, and raised Nvidia’s estimate of addressable AI chip demand to about 1 trillion dollars through 2027, roughly double last year’s 500 billion view through 2026.

On the commercial side, Nvidia is deepening ties with hyperscale buyers. AI cloud provider Nebius signed a five-year deal with Meta worth up to 27 billion dollars, built around one of the first large-scale deployments of the Vera Rubin platform, with 12 billion dollars of dedicated capacity and up to 15 billion dollars in additional compute that Meta will take if Nebius does not sell it to other customers. At the same time, reports suggest Meta is weighing layoffs of around 20 percent of its workforce to help fund an AI infrastructure budget that could reach 600 billion dollars by 2028, underlining the double-edged nature of this boom: huge orders for Nvidia and its partners, but intense efficiency pressure on the buyers footing the bill.

Groq 3 and five new racks: how Nvidia is putting together an AI datacenter

A key product innovation is Groq 3, a dedicated chip for inference, i.e. running AI models in production. It follows an acqui-hire by Nvidia ($NVDA): the company struck a licensing deal with Groq and brought over founder Jonathan Ross, president Sunny Madra and other key people in a package worth roughly $20 billion. Groq 3 complements Nvidia's classic GPUs, giving the company a dedicated inference chip at a time when the market's center of gravity is shifting from training models to deploying them.

In parallel, Nvidia unveiled five new server racks based on the Rubin platform. The platform combines six key chips (Vera CPU, Rubin GPU, NVLink 6, ConnectX, BlueField and Spectrum 6) to reduce training and inference costs by up to a tenth compared to the previous Blackwell generation, thanks to greater efficiency and fewer GPUs per system. From an investor's perspective, this means Nvidia wants to be not just a supplier of individual chips but the architect of an entire AI datacenter, which strengthens its negotiating position with cloud providers and hyperscalers alike.

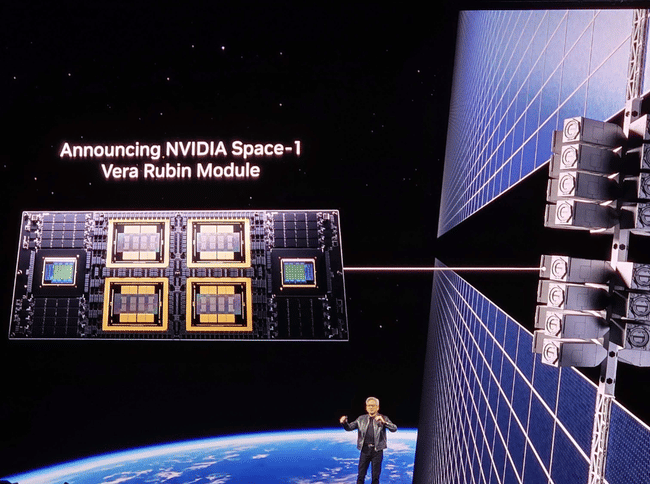

Space as the next frontier: the Vera Rubin Space Module

GTC also brought a symbolic innovation: the Vera Rubin Space Module, a modular platform for on-orbit datacenters, geospatial intelligence and autonomous operations in space. Nvidia is building on the success of startup Starcloud, which took an H100 GPU into orbit in 2025 and ran Google's Gemma and NanoGPT-based models on it.

According to Nvidia, the Rubin GPU is expected to offer up to 25 times more AI performance for "space-based inference" compared to the H100, paving the way for real-world processing of large volumes of data directly on satellites. The Vera Rubin Space Module platform will also be complemented by IGX Thor and Jetson Orin systems for other types of orbital tasks. From a business perspective, this is not a high-volume segment like traditional datacenters in the short term, but a high-margin, technologically prestigious showcase that reinforces Nvidia's brand in the space and geointelligence industry.

AI agents, NemoClaw and the fight for "desktop"

At the software level, Nvidia announced NemoClaw, a security and control layer for the OpenClaw platform, which enables AI agents to operate on users' desktops. Originally released as Clawd, then renamed Moltbot and finally OpenClaw, the platform lets agents work with various AI models and act on behalf of the user in WhatsApp, Discord, Slack and other applications.

OpenClaw raises privacy and security concerns, because the agent can control the computer and access personal data. NemoClaw aims to address these concerns by adding a set of tools for permission management, auditing and security sandboxes. Nvidia is also positioning its GeForce RTX, RTX Pro Station, DGX Station and DGX Spark platforms as the preferred hardware to run these agents, bridging the consumer and professional segments.

Uber, Lyft and others: AI chips as the brains of robotaxi networks

There was also news from the autonomous mobility sector at GTC. Nvidia announced that Uber will begin deploying a fleet of Level 4 autonomous vehicles based on its Drive Hyperion platform in Los Angeles and San Francisco in 2027. This follows up on a previous agreement to acquire up to 100,000 vehicles on the platform, this time with specific timelines and locations.

In addition to Uber, Lyft, Bolt and Grab are also using Nvidia's systems for their self-driving projects. From Nvidia's perspective, it's further confirmation that its chips and software are making inroads into autonomous transportation, diversifying revenue beyond traditional datacenters while creating a long-term chip business directly in the automotive sector.

Nebius - Meta: $27 billion contract as an advertisement for Vera Rubin

The biggest financial number around GTC came not directly from Nvidia, but from its ecosystem. Cloud partner Nebius announced a five-year deal with Meta Platforms for up to $27 billion to deliver AI infrastructure based on Vera Rubin. The structure of the contract is two-tiered:

$12 billion of firmly contracted dedicated capacity across multiple sites.

Up to $15 billion of optional capacity, which Nebius initially offers to other customers and Meta will buy if it remains unused.
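The two-tier structure can be illustrated with a small calculation. The sketch below is only a rough model: it assumes, purely for illustration, that Meta pays for exactly the portion of the optional tier that Nebius does not sell elsewhere and that the amounts scale linearly. The function name `meta_spend_bn` and the share parameter are hypothetical; only the $12 billion and $15 billion figures come from the deal as described above.

```python
# Figures from the article, in billions of dollars.
FIRM_CAPACITY_BN = 12.0      # firmly contracted dedicated capacity
OPTIONAL_CAPACITY_BN = 15.0  # ceiling of the optional tier


def meta_spend_bn(sold_to_others_share: float) -> float:
    """Estimate Meta's total spend in $bn.

    sold_to_others_share is the (hypothetical) fraction of the optional
    capacity that Nebius manages to sell to other customers.
    Assumption: Meta buys all of the remainder, priced proportionally.
    """
    if not 0.0 <= sold_to_others_share <= 1.0:
        raise ValueError("share must be between 0 and 1")
    unused_bn = OPTIONAL_CAPACITY_BN * (1.0 - sold_to_others_share)
    return FIRM_CAPACITY_BN + unused_bn


if __name__ == "__main__":
    for share in (0.0, 0.5, 1.0):
        total = meta_spend_bn(share)
        print(f"{share:.0%} sold to others: Meta spends about ${total:.1f}bn")
```

Under these assumptions, Meta's spend ranges from the $12 billion floor (all optional capacity sold to others) to the $27 billion headline figure (none sold).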

Nvidia has announced a $2 billion investment in Nebius, underscoring its interest in developing the partner and using it as a showcase for Rubin. At the same time, Meta's AI plans point to possible AI CAPEX of up to $135 billion in 2026, so the Nebius deal is just one part of a broader move to an "asset-light" model of buying capacity. What matters to Nvidia investors is that Rubin is getting into large production deployments in the first wave, and that Nvidia is participating in this both through chip supply and through an equity stake in Nebius.

How big can Nvidia's contribution to growth be

Huang's new outlook of $1 trillion in AI chip demand by 2027 builds on last year's figure of $500 billion for the period through 2026. The company also revealed in its Q4 results for fiscal year 2026 that data center revenue reached $62.3 billion in the quarter and accounted for over 90% of total revenue. GTC 2026 is meant to signal to investors that growth is not just about one generation of GPUs, but the entire product and software layer from data center to automotive to edge and orbit.

The contribution of each news item can be summarized as follows:

Groq 3 and Rubin ensure that Nvidia has the hardware for the next phase of AI (agent systems, massive inference) and can continue to push the price per token down.

NemoClaw and OpenClaw open up a new area of software revenue and strengthen the link to end users and businesses.

Uber and other transportation partners are expanding Nvidia's presence in the auto sector.

Vera Rubin Space Module shows new segments with potentially high added value.

Nebius - Meta contract demonstrates that big players are willing to tie tens of billions of dollars to infrastructure based on Nvidia's new platform.