Microsoft is shifting from being “the OpenAI company” to a two‑track AI strategy. The company has put Mustafa Suleyman in charge of a dedicated Microsoft AI organization and now openly targets having its own state‑of‑the‑art large models for text, image and audio by 2027, competitive with the very best systems on the market. Freed from earlier contractual limits that discouraged it from pursuing AGI on its own, Microsoft is pouring tens of billions of dollars into AI‑tuned data centers and a frontier‑model team so that Copilot and Azure don’t depend forever on a single outside lab.

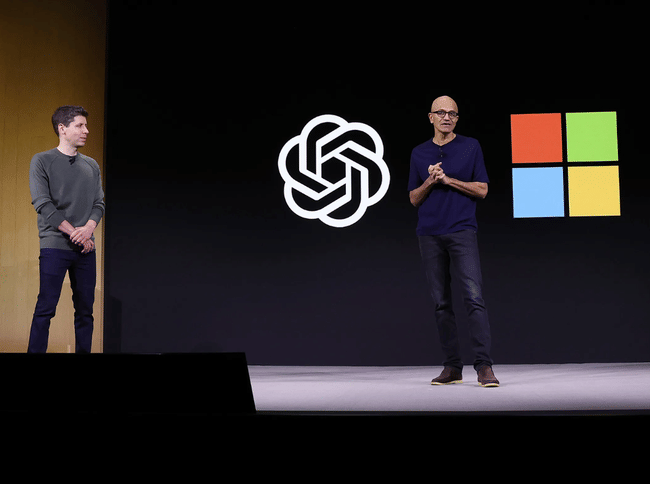

That does not mean a divorce from OpenAI. A revised partnership struck in October 2025 gives Microsoft a roughly 20–30% economic stake in OpenAI, access to its IP and models through 2032, and a commitment that OpenAI will buy about $250 billion of Azure cloud capacity over time, making it one of Microsoft’s largest anchor customers. The pact was intentionally designed to let both sides pursue independent opportunities, so Microsoft can build its own frontier models while still treating OpenAI as its primary external model partner. The result is a hedge that spreads technical risk, locks in cloud revenue and keeps the company central to the next wave of AI, no matter which lab is ahead at any given moment.

A new arrangement with OpenAI: less dependence, more freedom

The new agreement rewrites the existing relationship. Microsoft retains a significant minority stake in OpenAI and access to its models, but is no longer the "mandatory" exclusive provider of computing power. Three points are crucial:

Microsoft has licensing rights to use OpenAI's models and products until 2032, including those developed after artificial general intelligence (AGI) is achieved.

OpenAI has committed to purchasing a large volume of Azure services in the coming years, guaranteeing Microsoft long-term cloud revenue.

Both parties have explicitly freed themselves to develop their own, competing models, so Microsoft is no longer just "packaging" OpenAI's technologies into its products but can compete at the cutting edge of AI itself.

This is what Suleyman identifies as a key prerequisite: keeping the partnership with OpenAI in place while also holding a contractually confirmed right to go its own way when that is strategically advantageous.

First custom models: speech transcription, voice and images

The clearest proof that this is more than marketing is the newly introduced speech-to-text model. Microsoft claims that its system achieves a significantly lower error rate than the previously widely used solutions from OpenAI and other competitors, while requiring less computing power. This matters for anyone looking to transcribe calls in bulk, from customer service lines to media houses.

Alongside this, the company has also released its own voice and image generation models for widespread commercial use. This makes it clear that it doesn't want to depend solely on external partners in these segments. For users of Microsoft 365, Azure and other products, this means that it is no longer just OpenAI running "under the hood", but increasingly Microsoft's own technology.

Huge infrastructure investments: those who want their own models must have their own "power plant"

To compete realistically with the biggest players, Microsoft needs not only researchers but, more importantly, computing power and energy. That's why the company is significantly increasing its investment in data centers and the GPUs on which the big models are trained. In fiscal year 2026 alone, more than $120 billion is expected to go into AI infrastructure.

Meanwhile, Microsoft CEO Satya Nadella has long said that the race for AI is not just about the number of chips, but also about their efficient use and the cost of energy. That's why Microsoft is also investing in optimizing its models to do more work with fewer computing resources. This applies not just to training but, more importantly, to day-to-day operations: the cheaper a single model query is, the easier it is for AI to pay for itself in real-world applications.

The partnership continues, but the AI strategy is expanding

Despite all the changes, Microsoft is publicly presenting the partnership with OpenAI as strong and long-term. OpenAI's products continue to run on the Azure cloud, and OpenAI's models remain deeply integrated into Microsoft's offerings. The difference is that the leadership in Redmond no longer wants the company's entire AI story to rest on OpenAI alone.

Rather, the new direction is "multi-model": OpenAI's models, Microsoft's own models and third-party models can run side by side in a single service. The company will choose whichever system is best suited to the task at hand. Sometimes that will be a high-end, general-purpose model, and sometimes a specialized, cheaper, faster variant.
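To make the "multi-model" idea concrete, here is a minimal sketch of how such a selection could work in principle. It is not Microsoft's actual routing logic, which the article does not describe. The model names, quality scores and relative costs are all hypothetical, and the rule used (pick the cheapest model that meets a minimum quality bar) is only one plausible policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Model:
    name: str             # hypothetical model name, not a real product
    provider: str         # "openai", "microsoft" or "third_party"
    quality: int          # hypothetical capability score from 1 to 10
    cost_per_call: float  # hypothetical relative cost per query


# Illustrative catalog only; every value here is an assumption.
CATALOG = [
    Model("frontier-general", "openai", 9, 1.00),
    Model("in-house-general", "microsoft", 8, 0.60),
    Model("small-fast", "third_party", 5, 0.05),
]


def pick_model(min_quality: int, catalog=CATALOG) -> Model:
    """Return the cheapest model whose quality meets the task's bar."""
    candidates = [m for m in catalog if m.quality >= min_quality]
    if not candidates:
        raise ValueError(f"no model reaches quality {min_quality}")
    return min(candidates, key=lambda m: m.cost_per_call)


# A simple task goes to the cheap model, a demanding one to a larger model.
print(pick_model(4).name)
print(pick_model(9).name)
```

Under this policy an expensive general-purpose model is used only when a task actually requires it, which is the cost logic the multi-model approach implies.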

AI across products: Copilot as a gateway

At the same time, Microsoft continues to push AI across its entire portfolio. Copilot in Office, Windows, and other applications is becoming the main face of the new strategy. It is gradually evolving from the original "assistant" into a tool that can proactively perform tasks and work across services, for example finding the right documents, preparing analyses, or automatically resolving simple customer requests.

So far, only a minority of users pay extra for Copilot, but this is where Microsoft sees huge room for growth. Every extra percentage point in adoption among the hundreds of millions of Microsoft 365 users means billions in additional revenue per year. The combination of proprietary models, a strong cloud, and deep integration into everyday tools gives the company a chance to be not just an "AI component vendor" but the main interface through which businesses and individuals interact with AI.
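The arithmetic behind the adoption claim can be sketched in a few lines. The inputs are assumptions chosen for illustration, not figures from the article: a base of 400 million Microsoft 365 seats (the text says only "hundreds of millions") and a Copilot add-on price of $30 per user per month. The result scales linearly with both.

```python
def added_annual_revenue(seats: int, adoption_points: float,
                         price_per_month: float) -> float:
    """Extra yearly revenue from a gain of `adoption_points` percentage points."""
    new_paying_users = seats * adoption_points / 100
    return new_paying_users * price_per_month * 12


# Assumed inputs (hypothetical): 400M seats, +1 point, $30 per user per month.
revenue = added_annual_revenue(400_000_000, 1, 30.0)
print(f"${revenue / 1e9:.2f} billion per year")  # prints "$1.44 billion per year"
```

On these assumptions a single percentage point is worth about $1.4 billion a year, so gains of a few points quickly add up to several billion.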